The 90-Day Hypercare Model: Why Post-Launch Support Is the Difference Between Systems That Work and Systems That Die

27 March 2026 • By Jakub Cambor, Founder of AI for Marketing | Top 1% Upwork Expert Vetted Talent

Last updated: 27 March 2026

The business world is currently saturated with a dangerous misconception about artificial intelligence. Business leaders, founders, and marketing directors are investing heavily in automated solutions, operating under the assumption that AI is a magic wand. They believe that once the code is written and the application programming interfaces are connected, the system will run flawlessly in perpetuity. This fundamental misunderstanding is the primary reason why so many expensive technological investments fail within their first quarter of operation.

Most AI implementations do not fail because the initial architecture was flawed or the strategy was misguided. They fail because the build was treated as the finish line. When businesses treat complex, autonomous workflows like traditional static software, they invite operational disaster. Artificial intelligence is not a static entity. It is a dynamic, highly sensitive ecosystem that interacts with unpredictable human inputs, shifting search engine algorithms, and constantly updating language models.

Day one always looks impressive: workflows are live, automations trigger, content drafts appear, reporting dashboards fill up, and leadership gets the reassuring sense that the business has officially adopted AI. Then reality arrives. A data source changes format. An API response becomes inconsistent. A prompt that was stable in staging produces a subtle change in tone in production. Deliverability dips as volume ramps up. A new edge case appears that was never in the test set. The system still runs, but the output quality degrades, the team loses confidence, and usage quietly declines. That is the exact moment systems die. They do not die with a dramatic outage, but with a slow, silent loss of trust.

At AI for Marketing (AfM), we operate on a fundamentally different philosophy. We build systems designed for the Bionic Marketer. In this paradigm, artificial intelligence serves as a powerful exoskeleton. It amplifies human capability, accelerates content production, and scales data analysis. However, just like any advanced piece of machinery, this exoskeleton requires strict calibration, continuous monitoring, and expert oversight to function safely and effectively. It complements human expertise rather than replacing it.

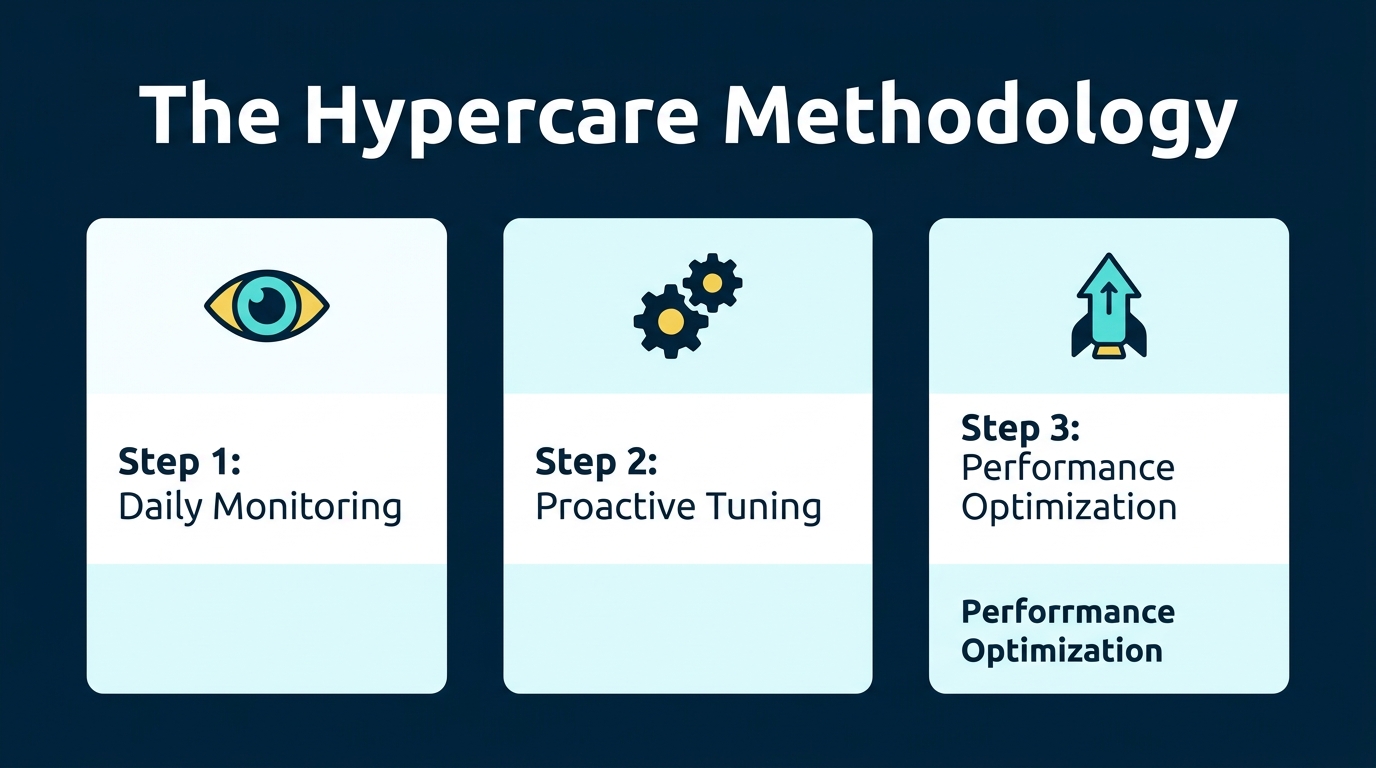

This brings us to the most critical, yet frequently overlooked, phase of any technological deployment. Comprehensive post-launch support and maintenance is the bridge between a fragile technological experiment and a robust, revenue-generating business asset. Without it, even the most elegantly designed workflows will inevitably degrade. Our answer is the 90-Day Hypercare Model: an intensive post-launch period built to stabilize autonomous systems in the real world, where inputs are messy, platforms evolve, and performance must be proven under live load.

The "Set It and Forget It" Myth: Why Unmonitored AI Systems Fail

To understand the necessity of intensive post-launch support, we must first dismantle the illusion of autonomous perfection. The idea that AI automation can be launched and then left alone is appealing, especially to teams already stretched thin. It is also the fastest route to disappointing results.

AI-enabled marketing systems sit at the intersection of multiple moving parts: model behavior, prompt logic, data pipelines, channel policies, deliverability constraints, and human review workflows. Each component can change independently. Without structured post-launch monitoring, small issues compound until the system becomes unreliable. Here are the most common failure points we see when organizations skip true post-launch support.

1. API Drift and Provider Updates

The core engine of any modern marketing automation system relies on application programming interfaces that connect to large language models from providers such as OpenAI and Anthropic, or to specialized data providers. These foundational models are not static. The companies developing them frequently update the underlying models, adjust their safety filters, and change how the models interpret context.

This phenomenon is commonly described as API drift. A carefully engineered prompt that generated perfectly formatted, brand-aligned social media copy in January might yield noticeably different, erratic results by March because the model provider shipped an unannounced update in the background. The result is rarely a visible error code. More often, it is a subtle change in output behavior:

- Output becomes significantly more verbose or overly cautious.

- Formatting changes break downstream parsing tools and CRM integrations.

- Tone shifts away from the established brand voice.

- The model follows complex, multi-step instructions less consistently.

- Tool calls become highly unreliable in edge scenarios.

In marketing systems, subtle change is expensive. A small reduction in relevance can lower conversion rates. A slight change in compliance behavior can introduce brand risk. This is why API maintenance cannot be treated as a one-time integration task. It is a strict operational discipline.
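As a rough illustration of what monitoring for this kind of drift can look like, the sketch below compares a batch of current outputs against a stored baseline on two cheap signals: average length and parse failure rate. It assumes each model response is a JSON object with a `copy` field. That schema, the function names, and the threshold values are hypothetical and only serve the example.

```python
import json
from statistics import mean


def profile_outputs(raw_outputs):
    """Summarize a non-empty batch of raw model responses with cheap metrics."""
    word_counts = []
    failures = 0
    for raw in raw_outputs:
        try:
            # Assumed schema: {"copy": "<generated text>"}
            copy = json.loads(raw)["copy"]
            words = len(copy.split())
        except (json.JSONDecodeError, KeyError, TypeError, AttributeError):
            failures += 1
            continue
        word_counts.append(words)
    return {
        "avg_words": mean(word_counts) if word_counts else 0.0,
        "parse_failure_rate": failures / len(raw_outputs),
    }


def detect_drift(baseline, current, max_length_change=0.30, max_failure_rate=0.05):
    """Return drift warnings; an empty list means nothing was flagged."""
    warnings = []
    if baseline["avg_words"] > 0:
        change = abs(current["avg_words"] - baseline["avg_words"]) / baseline["avg_words"]
        if change > max_length_change:
            warnings.append(f"average length changed by {change:.0%}")
    if current["parse_failure_rate"] > max_failure_rate:
        warnings.append(f"parse failure rate is {current['parse_failure_rate']:.0%}")
    return warnings
```

A check like this does not measure tone or relevance. It only catches the verbosity and formatting symptoms listed above, so it complements human review rather than replacing it.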

2. The Edge Case Dilemma in Live Environments

Pre-launch testing is a controlled environment. Engineers and marketers can simulate thousands of interactions, but they can never account for one hundred percent of real-world anomalies. When an automated workflow meets the public, it encounters edge cases. These are highly specific, unanticipated user inputs or data irregularities that the system was not explicitly trained to handle.

In production, your systems will inevitably encounter unusual customer queries, CRM records with missing fields, product names that overlap with competitor terms, regional language variations, and unexpected seasonal intent in search and social channels. AI systems are excellent at handling variation, but only when they have been designed with robust error handling, guardrails, fallbacks, and escalation paths.
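To make guardrails, fallbacks, and escalation paths concrete, here is a minimal sketch of a gate that inspects a CRM record before it reaches any AI step. The required fields, the safe default, and the three action names are assumptions made for the example, not a description of any particular CRM or of our production rules.

```python
from dataclasses import dataclass, field

# Hypothetical schema and defaults, for illustration only.
REQUIRED_FIELDS = ("email", "first_name", "company")
SAFE_DEFAULTS = {"first_name": "there"}


@dataclass
class RoutingDecision:
    action: str  # "process", "process_with_defaults", or "escalate"
    record: dict
    missing: list = field(default_factory=list)


def route_record(record):
    """Decide whether a record can be processed, patched, or must be escalated."""
    missing = [
        name for name in REQUIRED_FIELDS
        if not str(record.get(name) or "").strip()
    ]
    if not missing:
        return RoutingDecision("process", dict(record))
    # Fields without a safe default cannot be guessed, so a human takes over.
    if any(name not in SAFE_DEFAULTS for name in missing):
        return RoutingDecision("escalate", dict(record), missing)
    patched = {**record, **{name: SAFE_DEFAULTS[name] for name in missing}}
    return RoutingDecision("process_with_defaults", patched, missing)
```

The design choice worth noting is that a missing email escalates instead of being defaulted: a fallback should only fill values where a wrong guess is harmless.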

When deploying a sophisticated Multi-Agent Engine, the risk of compounding errors rises sharply without strict oversight. If one agent misinterprets an edge case, it passes flawed data to the next agent in the sequence, creating a cascade of operational failures. Proactive monitoring catches these edge cases in real time, allowing engineers to update system instructions so the agent handles that specific anomaly correctly in the future.

3. AI Performance Drift and Brand Voice Dilution

Artificial intelligence is highly sensitive to the context window and the data it processes over time. AI performance drift occurs when an automated system slowly deviates from its original parameters. In marketing, this usually manifests as brand voice dilution. An automated content engine might start using vocabulary that is slightly too academic for your target audience, or a localized campaign might lose its regional nuance.

Drift is especially common when systems are built quickly using basic prompts and then left alone. Over time, teams stop reviewing every output. The AI starts to shape the brand voice instead of supporting it. Outputs gradually move closer to being almost acceptable rather than remaining on-brand and precise. Precision-engineered systems require human experts to regularly review the outputs, score the quality, and recalibrate the foundational instructions to ensure the brand voice remains sharp and authoritative.

4. Silent Failures and Brand Damage

Many AI workflows fail silently. A scheduler still runs, a webhook still fires, a spreadsheet still fills up. The technical infrastructure appears healthy, but the commercial outcomes degrade rapidly. Outreach sequences trigger but book fewer calls. SEO pages publish but do not rank as expected. LinkedIn posts go out but engagement drops to zero. Reporting pulls partial data and leadership decisions are made on incomplete numbers.

Without active post-launch AI monitoring, the business notices the failure only after the quarterly results drop, and by then you are debugging under immense pressure while trying to recover lost revenue.

What is Hypercare? The 90-Day Gold Standard for AI Stability

Hypercare is an intensive, proactive support phase that begins the moment a new technological ecosystem goes live. It is not merely a reactive helpdesk service where engineers wait for the client to report a broken feature. Instead, it is a period of heightened surveillance, rapid iteration, and aggressive optimization. The goal of this phase is to act as a safety net, absorbing the shock of live deployment and ensuring the system adapts seamlessly to real-world operational demands.

In the broader technology sector, particularly in enterprise software, the concept of hypercare support phases is well-documented as the critical window for user adoption and system stabilization. We have adapted and enhanced this enterprise-grade methodology specifically for automated marketing infrastructures. In AI, hypercare is even more important because working is not a binary state. Quality is a spectrum, and the goal is not only technical uptime but consistent, on-brand, revenue-aligned performance.

Why 90 Days is the Gold Standard

Thirty days can catch obvious breakages, but ninety days stabilizes autonomous systems. We treat a 90-day window as the operational standard because it provides enough runway to:

- Capture a meaningful range of audience behavior patterns.

- Observe channel variability and engagement fluctuations.

- Identify rare edge cases that only appear with high volume.

- Tune sequences based on statistically significant performance data.

- Manage API drift and provider changes that occur mid-cycle.

- Transition from manual supervision to automated alerts with absolute confidence.

It is also short enough to keep urgency high. Hypercare is not a slow retainer with vague outcomes. It is a focused engineering and operations program designed to turn a launch into a durable, compounding asset.

Phase 1: Days 1-30 (Stabilization and Daily Monitoring)

The first thirty days following deployment are the most volatile. The system has just been introduced to live data, real customers, and live API environments. Our primary objective during Phase 1 is stabilization. We operate on high alert, treating the system with the same level of scrutiny as a critical financial infrastructure.

This is where most teams discover the vast gap between what worked in testing and what works in the live business environment. During this window, our engineering team and your dedicated account manager conduct daily audits of the system outputs.

Daily Monitoring Protocols

- Pipeline Integrity and Data Flow: We verify that inputs are arriving on time, fields are complete and correctly mapped, enrichment steps are returning consistent formats, and there are no unexpected changes in CRM schemas or naming conventions.

- Output Correctness and Brand Alignment: We check if the tone matches the brand voice precisely. We ensure claims are verifiable and compliant, calls-to-action are appropriate to the funnel stage, and we actively hunt for repetitive phrasing or template-like content patterns.

- Error Handling and Fallbacks: We monitor whether failed steps are retried correctly, timeouts escalate properly, exceptions are logged with enough context to fix quickly, and safe defaults trigger when data is missing.
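The error-handling behavior in the last item can be sketched as a small wrapper: retry a failing step with exponential backoff, log each failure with context, and return a safe default once attempts run out. The function name, logger name, and default values are hypothetical, and a real workflow would usually catch narrower exception types than this sketch does.

```python
import logging
import time

logger = logging.getLogger("hypercare")  # hypothetical logger name


def call_with_retry(step, *, attempts=3, base_delay=1.0, fallback=None, sleep=time.sleep):
    """Run step(); on failure retry with exponential backoff, then return fallback."""
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as exc:  # deliberately broad in this sketch
            logger.warning("step failed (attempt %d/%d): %r", attempt, attempts, exc)
            if attempt < attempts:
                # Delays grow as base_delay, 2 * base_delay, 4 * base_delay, ...
                sleep(base_delay * 2 ** (attempt - 1))
    logger.error("step exhausted %d attempts; using fallback", attempts)
    return fallback
```

The `sleep` parameter is injectable so the backoff can be tested without waiting. In production the default `time.sleep` applies.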

Operationally, this period aligns with what many teams describe as the initial friction window. The immediate post-go-live hypercare period is where the vast majority of critical system friction is identified and resolved. By catching these issues internally, we prevent them from ever impacting your end-users or your bottom line. We handle all error logging, payload inspections, and immediate hotfixes.

By the end of Phase 1, your system will be stable under normal daily usage, producing outputs that require decreasing human correction, and protected by basic guardrails that prevent obvious brand risk.

Phase 2: Days 31-60 (AI Sequence Tuning and Performance Optimization)

Once the system is stabilized and critical errors have been engineered out of the workflow, we transition our focus from basic functionality to high-level optimization. Phase 2 is about answering a different question: The system works, but is it operating at peak commercial efficiency?

This phase involves deep AI sequence tuning. We analyze the aggregated data from the first thirty days to identify bottlenecks and areas for improvement. AI sequence tuning is the structured refinement of prompt logic, instruction hierarchy, tool usage patterns, step ordering, gating rules, and confidence checks.

Many teams assume prompts are static. In reality, prompts are exactly like direct response copy: they must be tested, refined, and aligned to audience response.
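One common way to test a prompt change the way you would test copy is a two-proportion z-test on conversion counts from two variants. The sketch below uses only the standard library. The variant counts in the usage note are invented for illustration, and the test assumes independent samples that are large enough for the normal approximation to hold.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    Assumes the pooled rate is strictly between 0 and 1, otherwise the
    standard error is zero and the statistic is undefined.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, `two_proportion_z_test(40, 1000, 70, 1000)` compares a prompt that converted 40 of 1,000 recipients with a revised prompt that converted 70 of 1,000. A small p-value suggests the difference is unlikely to be noise, which is the bar a prompt change should clear before it replaces the incumbent.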

Performance Tuning for Content and Search

If the system includes an automated content workflow, Phase 2 is where you align production with live intent. Search intent changes, search engine results page layouts shift, competitor content evolves, and platform interpretations of helpful content continue to tighten. That means AI-generated content needs more than grammatical perfection. It needs strategic accuracy.

For instance, an automated SEO Engine requires continuous tuning during this phase to ensure the generated content aligns perfectly with shifting search intents, internal linking patterns, and the nuance of how your product should be positioned in the market against emerging competitors. We refine the prompts based on live engagement metrics, ensuring that the outputs are not just accurate, but commercially effective.

Performance Tuning for Executive Presence

For social media and thought leadership, the risk is different. The risk is not only generic content, but content that feels subtly inauthentic. Hypercare tuning focuses on point-of-view consistency, executive voice patterns, and structures that drive engagement without resorting to gimmicks. We build guardrails against overclaiming or generating shallow hot takes. When done properly, AI reduces the time cost of showing up consistently without turning your leadership presence into a robotic feed.

By the end of Phase 2, your system will be measurably improving output quality, producing fewer revisions per asset, delivering faster turnaround times, and operating with tuned prompts based on real market data.

Phase 3: Days 61-90 (Autonomy and Deliverability Management)

The final thirty days of the Hypercare model are focused on long-term sustainability and the gradual release of control. The system has been stabilized, tuned, and optimized. Now, we must ensure it can run autonomously with minimal daily intervention while maintaining strict quality standards.

Autonomy does not mean walking away. It means the system is reliable enough that humans can move from daily intervention to exception-based oversight. This is also where many AI marketing systems either scale safely or break under volume.

Deliverability Management at Scale

If your AI system includes outbound email, nurture sequences, or automated follow-up, deliverability is not a technical footnote. It is the entire channel. Generating thousands of hyper-personalized emails is a technical marvel, but it is entirely useless if those messages hit the spam folder.

As automated systems scale their output, they risk triggering spam filters or platform shadow-bans. Inbox providers respond aggressively to sudden send spikes, repetitive templates, low engagement rates, and poor list hygiene. AI can accelerate outreach, but it can also accelerate mistakes.

Our hypercare addresses this with strict deliverability oversight. We establish warm-up and ramp logic aligned to mailbox behavior. We engineer content variability that stays on brand while avoiding repetitive patterns. We monitor engagement, set up suppression rules, and adjust segmentation based on reply quality rather than just volume. We ensure that your AI exoskeleton operates within the speed limits of the digital ecosystem.
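Warm-up and ramp logic can be pictured as a schedule of daily send caps that grows gradually and holds whenever a health signal degrades. The sketch below is illustrative only: the growth rate and the bounce threshold are placeholder assumptions, not recommendations from any inbox provider, and the function names are hypothetical.

```python
def warmup_schedule(start_volume, target_volume, daily_growth=0.2):
    """Return daily send caps growing by daily_growth until target_volume is reached."""
    if start_volume <= 0 or target_volume < start_volume or daily_growth <= 0:
        raise ValueError("expected 0 < start_volume <= target_volume and daily_growth > 0")
    caps = []
    cap = float(start_volume)
    while cap < target_volume:
        caps.append(int(cap))
        cap *= 1 + daily_growth
    caps.append(target_volume)  # final day sends at the full target
    return caps


def todays_cap(planned_cap, previous_cap, bounce_rate, max_bounce_rate=0.02):
    """Hold at the previous cap when bounces exceed the threshold instead of ramping."""
    return previous_cap if bounce_rate > max_bounce_rate else planned_cap
```

With these assumptions, `warmup_schedule(50, 100, 0.5)` yields the caps 50, 75, and 100, and `todays_cap` keeps volume flat on any day when the bounce rate crosses the threshold.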

Operational Hardening

By Days 61-90, the work includes refining runbooks so your team knows exactly what to do when something changes. We set up alert thresholds that reflect business impact, define escalation paths, confirm security controls, and validate the system against updated key performance indicators.
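Alert thresholds tied to business impact, with an escalation path attached, can be expressed as data rather than buried in code. The following is a minimal sketch. The metric name, threshold values, and escalation labels are hypothetical and would come from your own KPIs and runbooks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    metric: str
    warn_below: float  # notify the workflow owner under this value
    page_below: float  # escalate to the on-call engineer under this value


def evaluate(rule, value):
    """Map a metric reading to an escalation step defined by the rule."""
    if value < rule.page_below:
        return "page_on_call"
    if value < rule.warn_below:
        return "notify_owner"
    return "ok"


# Example rule: reply rate on an outbound sequence (illustrative numbers).
REPLY_RATE = AlertRule(metric="reply_rate", warn_below=0.03, page_below=0.01)
```

Here `evaluate(REPLY_RATE, 0.02)` returns `"notify_owner"`, while a reading of `0.005` returns `"page_on_call"`. Keeping rules as plain records makes them easy to review alongside the runbook they belong to.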

By the end of day 90, the client possesses a precision-engineered marketing engine that is fully integrated into their business strategy, protected against immediate technical decay, and primed for scalable growth.

Core Components of Effective AI System Post-Launch Support Maintenance

The phrase AI system post-launch support maintenance is often used as a catch-all by software vendors. In practice, effective maintenance has highly specific components, each tied directly to risk reduction and performance protection. The AfM Hypercare model is a comprehensive, done-for-you service designed entirely around the needs of the overwhelmed pragmatist. We remove the technical complexity so you can focus purely on strategic growth.

Below are the pillars we treat as non-negotiable during our maintenance phases.

1. Unified Operational Management and Billing

One of the most significant operational hurdles for businesses adopting artificial intelligence is the fragmentation of the software ecosystem. Building a custom marketing engine often requires subscriptions to OpenAI for text generation, Anthropic for complex reasoning, vector databases for memory, and workflow routing tools. Managing multiple API keys, tracking token usage across different platforms, and dealing with unpredictable metered billing creates immense administrative anxiety.

Our service model eliminates this friction entirely. We handle the complex backend of token arbitration and API management. Clients receive a unified, predictable billing structure. You do not need to worry about hitting rate limits during a high-traffic campaign or managing separate developer accounts. We aggregate the technology, absorb the infrastructure complexity, and deliver a clean, consolidated service.

2. Deep Observability and System Health

AI workflows require deep visibility into inputs, transformations, outputs, error rates, and latency. This is not about vanity dashboards. It is about diagnosis speed. When an issue occurs, you need to know exactly what changed, where it changed, and what the business impact is.

Ongoing monitoring and alerts must be engineered deeply into the system, supported by a dedicated operational layer. Comprehensive System Health monitoring is the backbone of our maintenance strategy, ensuring uptime, precision, and proactive intervention rather than relying on reactive support tickets.

3. Proactive Fixes and Error Handling

The hallmark of premium post-launch support and maintenance is proactivity. Traditional IT support operates on a break-fix model: the client notices a problem, submits a ticket, and waits for a resolution. In the context of automated marketing, a break-fix model is financially disastrous. If a lead generation agent breaks on a Friday evening, waiting until Monday for a fix means losing a weekend of potential revenue.

Our systems are built with internal diagnostic tools. Proactive fixes include adjusting prompts when output variance increases, updating API handling when providers change policies, tightening guardrails when new edge cases appear, and patching parsers when downstream tools change. In most cases, our engineers diagnose, rewrite, and deploy a fix before the client is even aware that an anomaly occurred.

4. Human-in-the-Loop Design

The strongest AI systems in marketing are not the most autonomous. They are the most well-calibrated. Hypercare clarifies exactly what should be fully automated, what should be suggested and then approved, what requires expert judgment every single time, and where the AI should stop and ask for human clarity. This is what Bionic Marketing looks like in practice: extreme speed with unyielding standards, and high autonomy with strict accountability.
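The four levels of oversight described above map naturally onto a small routing function. This is a sketch under assumptions: the content categories, the confidence score, and the 0.9 threshold are hypothetical, and how confidence is computed is left open.

```python
from enum import Enum


class Review(Enum):
    AUTO_PUBLISH = "auto_publish"
    SUGGEST_FOR_APPROVAL = "suggest_for_approval"
    EXPERT_REQUIRED = "expert_required"
    ASK_FOR_CLARIFICATION = "ask_for_clarification"


# Hypothetical categories that always need expert judgment.
ALWAYS_EXPERT = {"pricing_claim", "legal", "press_release"}


def review_tier(content_type, confidence, inputs_complete=True, auto_threshold=0.9):
    """Pick the level of human involvement for one generated asset."""
    if not inputs_complete:
        return Review.ASK_FOR_CLARIFICATION  # stop and ask rather than guess
    if content_type in ALWAYS_EXPERT:
        return Review.EXPERT_REQUIRED
    if confidence >= auto_threshold:
        return Review.AUTO_PUBLISH
    return Review.SUGGEST_FOR_APPROVAL
```

The order of the checks is the design choice: missing inputs and sensitive categories are evaluated before confidence, so a high score can never bypass a human where one is always required.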

The Cost of Neglect vs. The ROI of Hypercare

When evaluating the necessity of AI system post-launch support maintenance, business leaders must shift their perspective from cost-avoidance to risk-reversal. Investing in a custom marketing automation build without securing a dedicated maintenance protocol is akin to purchasing a high-performance sports car and refusing to pay for oil changes. The initial thrill of speed will inevitably be followed by a catastrophic engine failure.

The True Cost of Neglect

The cost of neglect is rarely the cost of the rebuild. It is the compounding waste of resources. When an AI system is left unmonitored after deployment, organizations pay heavily in several ways:

- Lost Team Time: People stop trusting the system, abandon the tools, and revert to manual work.

- Brand Risk: Off-tone content, incorrect claims, or aggressive messaging slips through to valuable clients.

- Missed Pipeline: Sequences run but lead quality drops as the AI misinterprets intent.

- Channel Damage: Deliverability declines, engagement signals weaken, and domains get blacklisted.

- Decision Risk: Reporting becomes unreliable, leading to misallocated advertising spend.

The most expensive AI failure is the one that looks like it kind of works. That is how initiatives stall, budgets get cut, and leadership concludes that AI was not worth the investment.

The Compounding ROI of Hypercare

Conversely, the return on investment generated by the Hypercare model is compounding. Understanding why hypercare matters is fundamentally about protecting your initial capital investment and guaranteeing ROI. A properly maintained system becomes more efficient over time.

As our team tunes the sequences and refines the prompts based on live data, the cost per acquisition drops, the content quality rises, and the operational bandwidth of your human team expands dramatically. Higher reliability reduces internal resistance. Faster iteration improves performance earlier. Reduced manual review saves time without lowering standards.

A cheap AI tool can produce output. A well-supported, precision-engineered AI system produces commercial outcomes repeatedly. When AI system post-launch support maintenance is executed properly, you gain compounding content production with stable quality, repeatable workflows that survive staff changes, and a marketing team that moves exponentially faster without losing their strategic judgment. That is what Agency-as-a-Software is meant to deliver: not a temporary novelty, but permanent operational infrastructure.

Frequently Asked Questions (FAQ) About AI System Maintenance

What is AI system post-launch support maintenance?

AI system post-launch support maintenance is the intensive, ongoing monitoring, tuning, and operational management required immediately after deployment. It keeps AI workflows stable, accurate, and aligned with business goals by handling errors, optimizing performance, and safeguarding against drift caused by changing data or model behavior.

Why do AI marketing systems need a 90-day hypercare period?

A 90-day window is the operational gold standard because it provides enough live operating time to surface rare edge cases, measure performance under real market conditions, and tune sequences based on actual outcomes. It thoroughly stabilizes the technology so it can run autonomously without constant daily intervention.

How does API drift affect my automated marketing workflows?

API drift occurs when providers update the underlying language models or their safety filters in the background. Without ongoing maintenance, these invisible updates can cause your previously stable prompts to generate erratic, off-brand, or entirely broken content, silently damaging your marketing performance over time.

What happens if an AI system is left unmonitored after deployment?

Unmonitored systems inevitably suffer from performance degradation. They will encounter unanticipated user inputs they cannot process, leading to broken lead flows, hallucinated responses, diluted brand messaging, and ultimately, a complete failure of the automated infrastructure as the team loses trust in the outputs.

Does AI for Marketing handle the technical maintenance of my custom AI agents?

Yes. Our done-for-you service model includes comprehensive hypercare and ongoing support. We manage the API connections, perform daily system health checks, tune the automated sequences, and consolidate all token billing, allowing you to focus entirely on scaling your business without touching a single line of code.
